Eryk Salvaggio is a 2024 Flickr Foundation Research Fellow, diving into the relationships between images, their archives, and datasets through a creative research lens. This three-part series focuses on the ways archives such as Flickr can shape the outputs of generative AI in ways akin to a haunting. You can read parts one and two.

“Definitions belong to the definers, not the defined.”

―

Generative Artificial Intelligence is sometimes described as a remix engine. It is one of the more easily graspable metaphors for understanding these images, but it’s also wrong.

As a digital collage artist working before the rise of artificial intelligence, I was always remixing images. I would do a manual search of the public domain works available through the Internet Archive or Flickr Commons. I would download images into folders named for specific characteristics of various images. An orange would be added to the folder for fruits, but also round, and the color orange; cats could be found in both cats and animals.

I was organizing images solely on visual appearance. I was anticipating their retrieval whenever certain needs might emerge. If I needed something round to balance a particular composition, I could find it in the round folder, surrounded by other round things: fruits and stones and images of the sun, the globes of planets and human eyes.

Once in the folder, the images were shapes, and I could draw from them regardless of what they depicted. It didn’t matter where they came from. They were redefined according to their anticipated use.

A Churning

This was remixing, but I look back on this practice with fresh eyes when I consider the metaphor as it is applied to diffusion models. My transformation of source materials was based not merely on their shapes, but on their meaning. New juxtapositions emerged, recontextualizing those images. They retained their original form, but engaged in new dialogues through virtual assemblages.

As I explore AI images and the datasets that help produce them, I find myself moving away from the concept of the remix. The remix is a form of picking up a melody and evolving it, and it relies on human expression. It is a relationship, a gesture made in response to another gesture.

To believe we could “automate” remixing assumes too much of the systems that do this work. Remixes require an engagement with the source material. Generative AI systems do not have any relationship with the meanings embedded into the materials they reconfigure. In the absence of engagement, what machines do is better described as a churn, combining two senses of the word. Generative AI models churn images in that they dissolve the surface of these images. Then they churn out new images, that is, “to produce mechanically and in great volume.”

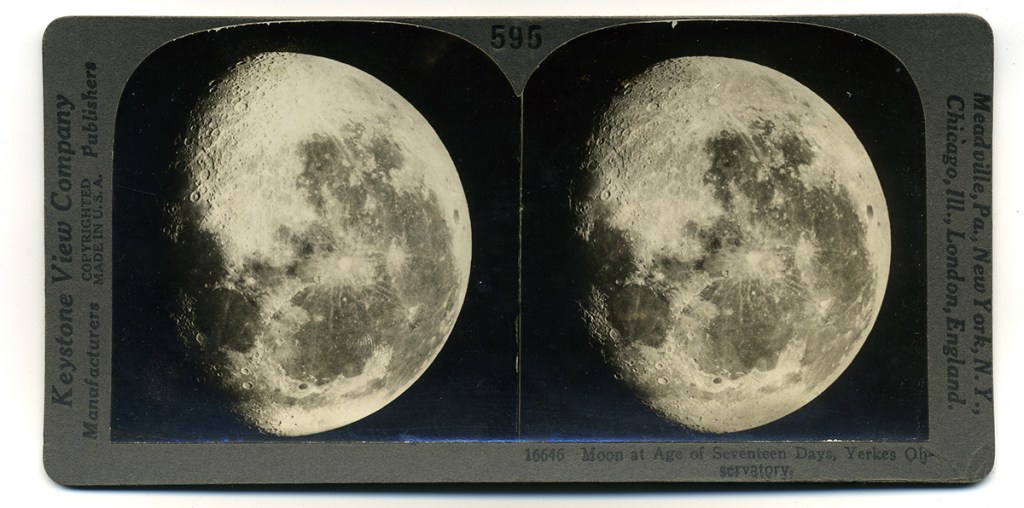

Of course, people can diffuse the surface meaning of images too. As a collagist, I could ignore the context of any image I liked. We can look at the stereogram below and see nothing but the moon. We don’t have to think about the tools used to make that image, or how it was circulated, or who profited from its production. But as a collagist, I could choose to engage with questions that were hidden by the surfaces of things. I could refrain from engagements with images, and their ghosts, that I did not want to disturb.

Actions taken by a person can model actions taken by a machine. But the ability to automate a person’s actions does not suggest the right or the wisdom to automate those actions. I wonder if, in the case of diffusion models, we shouldn’t more closely scrutinize the act of prising meaning from an image and casting it aside. This is something humans do when they are granted, or demand, the power to do so. The automation of that power may be legal. But it also calls for thoughtful restraint.

In this essay, I want to explore the power to inscribe into images. Traditionally, the power to extract images from a place has been granted to those with the means to do so. Over the years, the distribution and circulation of images has been balanced against those who hold little power to resist it. In the automation of image extraction for training generative artificial intelligence, I believe we are embedding this practice into a form of data colonialism. I suggest that power differentials haunt the images that are produced by AI, because those differentials have molded the contents of the datasets, and the infrastructures, that result in those images.

The Crying Child

Temi Odumosu has written about the “digital reproduction of enslaved and colonized subjects held in cultural heritage collections.” In The Crying Child, Odumosu looks at the role of the digital image as a means of extending the life of a photographic memory. But this process is fraught, and Odumosu dedicates the paper to “revisiting those breaches (in trust) and colonial hauntings that follow photographed Afro-diasporic subjects from moment of capture, through archive, into code” (S290). It does so by focusing on a single image, taken in St. Croix in 1910:

“This photograph suspends in time a Black body, a series of compositional choices, actions, and a sound. It represents a child standing alone in a nondescript setting, barefoot with overpronation, in a dusty linen top too short to be a dress, and crying. Clearly in visible distress, with a running nose and copious tears rolling down its face, the child’s crinkled forehead gives a sense of concentrated energy exerted by all the emotion … Emotions that object to the circumstances of iconographic production.”

The image emerges from the Royal Danish Library. It was taken by Axel Ovesen, a military officer who operated a commercial photography business. The photograph was circulated as a postcard, and appears in a number of personal and commercial photo albums Odumosu found in the archive.

The unnamed crying child appeared to the Danish colonizers of the island as an amusement, and is labeled only as “the grumpy one” (in the sense of “uncooperative”). The contexts in which this image appeared and circulated were all oriented toward soothing and distancing the colonizers from the colonized. By reframing it as a humorous novelty, the power to apply and remove meaning is exercised on behalf of those who purchase the postcard and mail it to others for a laugh. What is literally depicted in these postcards is, Odumosu writes, “the means of production, rights of access, and dissemination” (S295).

I am describing this essay at length because the practice of categorizing this image in an archive is so neatly aligned with the collection and categorization of training data for algorithmic images. Too often, the images used for training are treated solely as data, and training defended as an act that leaves no traces. This is true. The digital copy remains intact.

But the image is degraded, literally, step by step until nothing remains but digital noise. The image is churned, the surface broken apart, and its traces stored as math tucked away in some vector space. It all seems very tidy, technical, and precise, if you treat the image as data. But to say so requires us to agree that the structures and patterns of the crying child in the archive — the shape of the child’s body, the details of the wrinkled skin around the child’s mouth — are somehow distinct from the meaning of the image.
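For readers who want to see what “step by step” means in practice, here is a minimal sketch of the noising process, not the procedure of any particular product. The toy four-value “image,” the function name, and the linear noise schedule (a choice often used in the diffusion literature) are all assumptions made for illustration.

```python
import math
import random

def forward_noise(pixels, steps=1000, beta_start=1e-4, beta_end=0.02, seed=0):
    """Gradually mix Gaussian noise into pixel values (assumed to lie in [-1, 1])."""
    rng = random.Random(seed)
    x = list(pixels)
    signal = 1.0  # how much of the original image's scale survives so far
    for t in range(steps):
        # Assumed linear schedule: each step adds slightly more noise than the last.
        beta = beta_start + (beta_end - beta_start) * t / (steps - 1)
        keep = math.sqrt(1.0 - beta)
        # Shrink what remains of the image and add a small amount of fresh noise.
        x = [keep * v + math.sqrt(beta) * rng.gauss(0.0, 1.0) for v in x]
        signal *= keep
    return x, signal

image = [0.8, -0.5, 0.1, 0.9]  # hypothetical stand-in for real pixel data
noised, remaining = forward_noise(image)
```

Under these assumptions, `remaining` ends up below one percent: the values in `noised` are almost entirely static. The source file on disk is untouched, which is the technical basis for the claim that training “leaves no traces.”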

Because by diffusing these images into an AI model, and pairing existing text labels to them within the model, we extend the reach of Danish colonial power over the image. For centuries, archives have organized collections into assemblages shaped and informed by a vision of those with power over those whose power is held back. The colonizing eye sets the crying child into the category of amusements, where it lingers until unearthed and questioned.

If these images are diffused into new images — untraceable images, images that claim to be without context or lineage — how do we uncover the way that this power is wielded and infused into the datasets, the models, and the images ultimately produced by the assemblage? What obligations linger beneath the surfaces of things?

Every Archive a Collage

Collage can be one path for people to access these images and evaluate their historical context. The human collage maker, the remixer, can assess and determine the appropriateness of the image for whatever use they have in mind. This can be an exercise of power, too, and it ought to be handled consciously. It has featured as a tool of Situationist detournement, a means of taking images from advertising and propaganda to reveal their contradictions and agendas. These are direct confrontations, artistic gestures that undermine the organization of the world that images impose on our sense of things. The collage can be used to exert power or challenge the status quo.

Every archive is a collage, a way of asserting that there is a place for things within an emergent or imposed structure. The scholar and artist Beth Coleman’s work points to the reversal of this relationship, citing W.E.B. Du Bois’ exhibition at the 1900 Paris Exposition. M. Murphy writes,

“Du Bois’s use of [photographic] evidence disrupted racial kinds rather than ordered them … Du Bois’s exhibition was crucially not an exhibit of ‘facts’ and ‘data’ that made black people in Georgia knowable to study, but rather a portrait in variation and difference so antagonistic to racist sociology as to dislodge race as a coherent object of study” (71).

The imposed structures of algorithmically generated images rely on facts and data, defined a certain way. They struggle with context and difference. The images these tools produce are constrained to the central tendencies of the data they were trained on, an inherently conformist technology.

To challenge these central tendencies means to engage with the structures this technology imposes on its data, and to critique this churn of images into data to begin with. Matthew Fuller and Eyal Weizman describe “hyper-aesthetic” images as not merely “part of a symbolic regime of representation, but actual traces and residues of material relations and of mediatic structures assembled to elicit them” (80).

Consider the stereoscope. Once the most popular means of accessing photographs, the stereoscope relied on a trick of the eye, akin to the use of 3D glasses. It combined two views of the same scene, one taken slightly to the left of the other. When viewed through a special viewing device, the human eye superimposes them, and the overlap creates the illusion of physical depth in a flat plane. We can find some examples of these on Flickr (including the Danish Film Museum) or at The Library of Congress’ Stereograph collection.

The time period in which this technology was popular happened to overlap with an era of brutal colonization, and the archival artifacts of this era contain traces of how images projected power.

I was struck by stereoscopic images of American imperialism in the Philippines during the US occupation, starting in 1899. They aimed to “bring to life” images of Filipino men dying in fields and other images of war, using the spectacle of the stereoscopic image as a mechanism for propaganda. These were circulated as novelties to Americans on the mainland, a way of asserting a gaze of dominance over those they occupied.

In the long American tradition of infotainment, the stereogram fused a novel technological spectacle with the effort to assert military might, paired with captions describing the US cause as just and noble while severely diminishing the numbers of civilian casualties. In Body Parts of Empire: Visual Abjection, Filipino Images, and the American Archive, Nerissa Balce writes that

“The popularity of war photographs, stereoscope viewers, and illustrated journals can be read as the public’s support for American expansion. It can also be read as the fascination for what were then new imperial ‘technologies of vision’” (52).

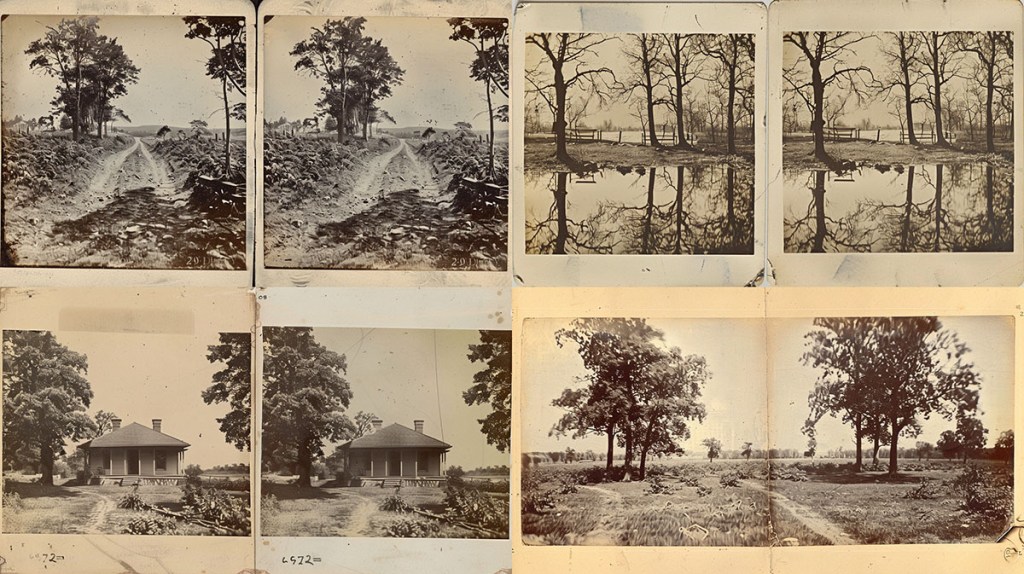

The link between stereograms as a style of image and the gaze of colonizing power is now deeply entrenched in the vector spaces of image synthesis systems. Prompt Midjourney for the style of a stereogram, and this history haunts the images it returns. Many prompted images for “Stereograms, 1900” do not even render the expected, highly formulaic structure of a stereogram (two of the same images, side by side, at a slight angle). They do, however, conjure images of those occupied lands. We see a visual echo of the colonizing gaze.

Images produced for the more generally used “stereoview,” even without the use of a date, still gravitate to a similar visual language. With “stereoview,” we are given the technical specifics of the medium. The content is more abstract: people are missing, but strongly suggested. These perhaps get me closest to the idea of a “haunted” image: a scene which suggests a history that I cannot directly access.

Perhaps there are two kinds of absences embedded in these systems: the people that colonizers want to erase, and the evidence of the colonizers themselves. Crucially, this gaze haunts these images.

Here are four sets of two pairs.

These styles are embedded into the prompt for the technology of image capture, the stereogram. The source material is inscribed with the gaze that controlled this apparatus. The method of that inscription — the stereogram — inscribes this material into the present images. The history is loaded into the keyword and its neighboring associations in the vector space. History becomes part of the churn. These are new old images, built from the associations of a single word (stereoview) into its messy surroundings.

It’s important to remember that the images above are not documents of historical places or events. They’re “hallucinations,” that is, they are a sample of images from a spectrum of possible images that exists at the intersection of every image labeled “stereoview.” But “stereoview” as a category does not isolate the technology from how it was used. The technology of the stereogram, or the stereoviewer, was deeply integrated into regimes of war, racial hierarchies, and power. The gaze, and the subject, are both aggregated, diffused, and made to emerge through the churning of the model.

Technologies of Flattening

The stereoview and the diffusion model are both technologies of spectacle, and they afford a similar power to those who control them. They are technologies for flattening, containing, and re-contextualizing the world into a specific order. For us as viewers, the generated image is never merely the surfaces of photography churned into new, abstract forms that resemble our prompts. It is an activation of the model’s symbolic regime, which is derived from the corpus of images because it has the power to isolate images from their meaning.

AI has the power of finance, which enables computational resources that make obtaining 5 billion images for a dataset possible, regardless of its impact on local environments. It has the resources to train on these images; the resources to recruit underpaid labor to annotate and sort these images. The critiques of AI infrastructure are numerous.

I am most interested here in one form of power that is the most invisible, which is the power of naturalizing and imposing an order of meaning through diffused imagery. The machine controls the way language becomes images. At the same time, it renders historical documentation meaningless — we can generate all kinds of historical footage now.

These images are reminders of the ways data colonialism has become embedded within not merely image generation but the infrastructures of machine learning. The scholar Tiara Roxanne has been investigating the haunting of AI systems since long before I began. In 2022 Roxanne noted that

“in data colonialism, forms of technological hauntings are experienced when Indigenous peoples are marked as ‘other,’ and remain unseen and unacknowledged. In this way, Indigenous peoples, as circumscribed through the fundamental settler-colonial structures built within machine learning systems, are haunted and confronted by this external technological force. Here, technology performs as a colonial ghost, one that continues to harm and violate Indigenous perspectives, voices, and overall identities” (49).

AI can ignore “the traces and residues of material relations” (Fuller and Weizman) as it reduces the image to its surfaces instead of the constellations of power that structured the original material. These images are the product of imbalances of power in the archive, and whatever interests those archives protected are now protected by an impenetrable, uncontestable, automated set of decisions steered by the past.

The Abstracted Colonial Subject

What we see in the above images is an inscription by association. The generated image, as a type of machine learning system, matters not only because of how it structures history into the present. It matters because it is a visualization that reaches to something far greater about automated decision making and the power it exerts over others.

These striations of power in the archive or museum, in the census or the polling data, in the medical records or the migration records, determine what we see and what we do not. What we see in generated images must contort itself around what has been excluded from the archives. What is visible is shaped by the invisible. In the real world, this can manifest as families living on a street serving as an indication of those who could not live on that street. It could be that loans granted by an algorithmic assessment always contain an echo of loans that were not approved.

The synthetic image visualizes these traces. They churn the surfaces, not the tangled reality beneath them. The images that emerge are glossy, professional, saturated. Hiding behind these products by and for the attention economy is the world of the not-seen. What are our obligations as viewers to the surfaces we churn when we prompt an image model? How do we reconcile our knowledge of context and history with the algorithmic detachment of these automated remixes?

The media scholar Roland Meyer writes that,

“[s]omewhere in the training data that feeds these models are photographs of real people, real places, and real events that have somehow, if only statistically, found their way into the image we are looking at. Historical reality is fundamentally absent from these images, but it haunts them nonetheless.”

In a seance, you raise spirits you have no right to speak to. The folly of it is the subject of countless warnings in stories, songs and folklore.

What if we took the prompt as seriously? What if typing words to trigger an image was treated as a means of summoning a hidden and unsettled history? Because that is what the prompt does. It agitates the archives. Sometimes, by accident, it surfaces something many would not care to see. Boldly, knowing that I am acting from a place of privilege and power, I ask the system to return “the abstracted colonial subject of photography.” I know I am conjuring something I should not be.

My words are transmitted into the model within a data center, where they flow through a set of vectors, the in-between state of thousands of photographs. My words are broken apart into key words — “abstracted, colonial, colonial subject, subject, photography.” These are further sliced into numerical tokens to represent the mathematical coordinates of these ideas within the model. From there, these coordinates offer points of cohesion which are applied to find an image within a jpg of digital static. The machine removes the noise toward an image that exists in the overlapping space of these vectors.

Avery Gordon, whose book Ghostly Matters is a rich source of thinking for this research, writes:

“… if there is one thing to be learned from the investigation of ghostly matters, it is that you cannot encounter this kind of disappearance as a grand historical fact, as a mass of data adding up to an event, marking itself in straight empty time, settling the ground for a future cleansed of its spirit” (63).

If history is present in the archives, the images churned from the archive disrupt our access to the flow of history. It prevents us from relating to the image with empathy, because there is no single human behind the image or within it. It’s the abstracted colonial gaze of power applied as a styling tool. It’s a mass of data claiming to be history.

Human and Mechanical Readings

I hope you will indulge me as my eye wanders through the resulting image.

I am struck by the glossiness of it. Midjourney is fine-tuned toward an aesthetic dataset, leaning into images found visually appealing based on human feedback. I note the presence of palm trees, which brings me to the Caribbean island of St. Croix, where The Crying Child photograph was taken. I see the presence of barbed wire, a signifier of a colonial presence.

The image is a double exposure. It reminds me of spirit photography, in which so-called psychic photographers would surreptitiously photograph a ghostly puppet before photographing a client. The image of the “ghost” was superimposed on the film to emerge in the resulting photo. These are associations that come to my mind as I glance at this image. I also wonder about what I don’t know how to read: the style of the dress, the patterns it contains, the haircut, the particulars of vegetation.

We can also look at the image as a machine does. Midjourney’s describe feature will tell us what words might create an image we show it. If I use it with the images it produces, it offers a kind of mirror-world insight into the relationship between the words I’ve used to summon that image and the categories of images from which it was drawn.

To be clear, both “readings” offer a loose, intuitive methodology, keeping in the spirit of the seance — a Ouija board of pixel values and text descriptors. They are a way in to the subject matter, offering paths for more rigorous documentation: multiple images for the same prompt, evaluated together to identify patterns and the prevalence of those patterns. That reveals something about the vector space.
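The more rigorous version of this method, gathering many descriptions and measuring how often each pattern recurs, could be sketched roughly as follows. This is an illustration only: the function name is hypothetical, the sample strings are placeholders rather than real Midjourney output, and it assumes descriptions arrive as comma-separated phrases.

```python
from collections import Counter

def descriptor_prevalence(descriptions):
    """Return, for each descriptor, the share of descriptions that contain it."""
    counts = Counter()
    for text in descriptions:
        # Split on commas and use a set so a repeated phrase counts once per description.
        terms = {t.strip().lower() for t in text.split(",") if t.strip()}
        counts.update(terms)
    total = len(descriptions)
    return {term: n / total for term, n in counts.most_common()}

# Placeholder descriptions, invented for illustration only.
descriptions = [
    "tropical style, palms, double exposure effect",
    "palms, red and teal color scheme, tropical style",
    "double exposure effect, palms, yellow infrared",
]
prevalence = descriptor_prevalence(descriptions)
```

A descriptor that appears in every description scores 1.0, and one that appears once in three scores about 0.33. Exact phrase matching is a deliberate simplification; it would treat “afrofuturist” and “afrofuturism” as different terms, so a fuller study would need to decide how to group near-synonyms.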

Here, I just want to see something, to compare the image as I see it to what the machine “sees.”

The image returned for “the abstracted colonial subject of photography” is described by Midjourney this way:

“There is a man standing in a field of tall grass, inverted colors, tropical style, female image in shadow, portrait of bald, azure and red tones, palms, double exposure effect, afrofuturist, camouflage made of love, in style of kar wai wong, red and teal color scheme, symmetrical realistic, yellow infrared, blurred and dreamy illustration.”

My words produced an image, and then those words disappeared from the image that was produced. “Colonial subject” is adjacent to the words the machine does see: “tall grass,” “afrofuturist,” “tropical.” Other descriptions recur as I prompt the model over and over again to describe this image, such as “Indian.” I have to imagine that this idea of colonized subjects “haunts” these keywords. The idea of the colonial subject is recognized by the system, but shuffled off to the nearest synonyms and euphemisms. Might this be a technical infrastructure through which the images are haunted? Could certain patterns of images be linked through unacknowledged, invisible categories the machine can only indirectly acknowledge?

I can only speculate. That’s the trouble with hauntings. It’s the limit to drawing any conclusions from these observations. But I would draw the reader’s attention to an important distinction between my actions as a collage artist and the images made by Midjourney. The image will be interpreted by many of us, who will find different ways to see it, and a human artist may put those meanings into adjacency through conscious decisions. But to create this image, we rely solely on a tool for automated churning.

We often describe the power of images in terms of what impact an image can have on the world. Less often we discuss the power that impacts the image: the power to structure and give the image form, to pose or arrange photographic subjects.

Every person interprets an image in different ways. A machine makes images for every person from a fixed set of coordinates, its variety constrained by the borders of its data. That concentrates power over images into the unknown coordination of a black box system. How might we intervene and challenge that power?

The Indifferent Archivist

We have no business conjuring ghosts if we don’t know how to speak to them. As a collage artist, “remixing” in 2016 meant creating new arrangements from old materials, suggesting new interpretations of archival images. I was able to step aside: as a white man in California, I would never use the images of colonized people for something as benign as “expressing myself.” I would know that I could not speak to that history. Best to leave that power to shift meanings and shape new narratives to those who could speak to it. Nonetheless, it is a power that can be wielded by those who have no rights to it.

Yes, by moving any accessible image from the online archive and transmuting it into training data, diffusion models assert this same power. But they are incapable of historic acknowledgement or obligation. The narratives of the source materials are blocked from view, in service to a technically embedded narrative that images are merely their surfaces and that surfaces are malleable. At its heart is the idea that the context of these images can be stripped and reduced into a molding clay, for anyone’s hands to shape to their own liking.

What matters is the power to determine the relationships our images have with the systems that include or exclude. It’s about the power to choose what becomes documented, and on what terms. Through directed attention, we may be able to work through the meanings of these gaps and traces. It is a useful antidote to the inattention of automated generalizations. To greet the ghosts in these archives presents an opportunity to intervene on behalf of complexity, nuance, and care.

That is the literal meaning of curation, at its Latin root: “curare,” to care. In this light, there is no such thing as automated curation.

Reclaiming Traceability

In 2021, Magda Tyzlik-Carver wrote “the practice of curating data is also an epistemological practice that needs interventions to consider futures, but also account for the past. This can be done by asking where data comes from. The task in curating data is to reclaim their traceability and to account for their lineage.”

When I started the “Ghost Stays in the Picture” research project, I intended to make linkages between the images produced by these systems and the categories within their training data. It would be a means of surfacing the power embedded into the source of this algorithmic churning within the vector space. I had hoped to highlight and respond to these algorithmic imaginaries by revealing the technical apparatus beneath the surface of generated images.

In 2024, no mainstream image generation tool offers the access necessary for us to gather any insights into its curatorial patterns. The image dataset I initially worked with for this project is gone. Images of power and domination were the reason: specifically, the Stanford Internet Observatory’s discovery of more than 3,000 images in the LAION-5B dataset depicting abused children. When I realized this, the churn of images became visceral, felt in the pit of my stomach. The traces of those images, the pain of any person in the dataset, linger in the models. Perhaps imperceptibly, they shape the structures and patterns of the images I see.

In gathering these images, there was no right to refuse, no intervention of care. Ghosts, Odumosu writes, “make their presences felt, precisely in those moments when the organizing structure has ruptured a caretaking contract; when the crime has not been sufficiently named or borne witness to; when someone is not paying attention” (S299).

The training of Generative Artificial Intelligence systems has relied upon the power to automate indifference. And if synthetic images are structured in this way, it is merely a visualization of how “artificial intelligence systems” structure the material world when carelessly deployed in other contexts. The synthetic image offers us a glimpse of what that world would look like, if only we would look critically at the structures that inform its spectacle. If we can read algorithmic decision-making as a lapse in care, a disintegration of accountability, we might see that fresh pavement has been poured onto sacred land.

This regime of Artificial Intelligence is not an inevitability. It is not even a single ideology. It is a computer system, and computer systems, and norms of interaction and participation with those systems, are malleable. Even with training datasets locked away behind corporate walls, it might still be possible “to insist on care where there has historically been none” (Odumosu S297), and by extension, to identify and refuse the automated inscription of the colonizing ghost.

This post concludes my research work at the Flickr Foundation, but I am eager to continue it. I am seeking publishers of art books, or curators for art or photographic exhibitions, who may be interested in a longer set of essays or a curatorial project that explores this methodology for reading AI generated images. If you’re interested, please reach out to me directly: eryk.salvaggio@gmail.com.